In the Search preferences section, you will see three areas that need some advanced blogging knowledge to work with: meta tags, errors and redirections, and crawlers and indexing. In this post I’ll try to give you an easy explanation of what a custom robots.txt is used for.

Robots.txt is a set of instructions that tells search engine robots which paths or content on your blog or site they may or may not crawl. It works like a filter. Every Blogspot site has a default robots.txt like the one below:

User-agent: Mediapartners-Google

Disallow:

User-agent: *

Disallow: /search

Allow: /

Sitemap: http://blogname.blogspot.com/feeds/posts/default?orderby=UPDATED

If you want to see yours, go to your browser address bar and type:

http://yourblogname.blogspot.com/robots.txt
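If you would rather read the file from a script than from the browser, here is a minimal sketch using only Python’s standard library. The helper names `robots_url` and `fetch_robots` are made up for this example, and `yourblogname` is a placeholder for your own blog name.

```python
from urllib.request import urlopen


def robots_url(blog_name: str) -> str:
    """Build the robots.txt address for a Blogspot blog name."""
    return f"http://{blog_name}.blogspot.com/robots.txt"


def fetch_robots(blog_name: str, timeout: float = 10.0) -> str:
    """Download robots.txt and return it as text (needs network access)."""
    with urlopen(robots_url(blog_name), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


# Example (replace the placeholder with your own blog name):
# print(fetch_robots("yourblogname"))
```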

What Do These Lines Mean?

User-agent: Mediapartners-Google

– The Google AdSense robot, which crawls your blog content. If you put AdSense on your blog, this robot helps the right ads get displayed on your pages.

Disallow:

– A command that tells the search engine robot not to visit the listed pages, posts, or categories. Here there is no / sign or path after it, which means the AdSense robot can crawl all your content.

User-agent: *

– All search engine robots (any robot that does not have a more specific User-agent group of its own).

Disallow: /search

– Robots have no permission to crawl anything under the search folder, such as /search/label and /search?updated, etc. Why? Because a label page is not a real post URL. Google wants people to find a topic through the search box, not by clicking randomly on labels or categories. It also helps to avoid duplicate content.

Allow: /

– Allows all other pages to be crawled, except the paths listed under Disallow above.

Sitemap: http://blogname.blogspot.com/feeds/posts/default?orderby=UPDATED

– Your blog feed address, which is used as the sitemap.
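To see how these rules play out, here is a minimal sketch that feeds the default rules above into Python’s standard `urllib.robotparser` module. The blog address and the sample paths are placeholders, and `is_allowed` is a helper invented for this example. This parser applies the first matching rule in a group, so in edge cases it may not decide exactly the way a particular search engine does.

```python
from urllib.robotparser import RobotFileParser

# Default Blogspot rules from the post. Directives in a group are kept on
# consecutive lines because this parser treats a blank line as a group separator.
DEFAULT_RULES = """\
User-agent: Mediapartners-Google
Disallow:

User-agent: *
Disallow: /search
Allow: /

Sitemap: http://blogname.blogspot.com/feeds/posts/default?orderby=UPDATED
"""

BASE = "http://blogname.blogspot.com"  # placeholder blog address

parser = RobotFileParser()
parser.parse(DEFAULT_RULES.splitlines())


def is_allowed(agent: str, path: str) -> bool:
    """Return True if `agent` may crawl `path` under the rules above."""
    return parser.can_fetch(agent, BASE + path)


for agent, path in [
    ("Googlebot", "/2020/01/sample-post.html"),      # hypothetical post URL
    ("Googlebot", "/search/label/news"),             # hypothetical label page
    ("Mediapartners-Google", "/search/label/news"),  # AdSense robot, same page
]:
    print(agent, path, is_allowed(agent, path))
```

With these rules, an ordinary robot is turned away from the label page but not from the post, while the AdSense robot is allowed everywhere because its own Disallow line is empty.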

How to Block a Certain Page

If you have been blogging for quite a long time, at some point you may want to stop robots from crawling a certain page. Maybe it’s private content that you only want to share with your co-workers. Keep in mind that robots.txt only asks robots to stay away; the file itself is public and does not password-protect the page. In the User-agent: * group, after the Disallow: /search line, you can add paths like the example below:

User-agent: *

Disallow: /search

Disallow: /p/secret-1.html

Disallow: /p/secret-2.html

Allow: /
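Before pasting rules like these into Blogger, you can try them out locally. The sketch below is only an illustration: `build_rules` is a hypothetical helper that writes the group shown above for a list of blocked paths, the blog address and `/p/about.html` are placeholders, and the check uses Python’s standard `urllib.robotparser`, which applies the first matching rule.

```python
from urllib.robotparser import RobotFileParser


def build_rules(blocked_paths):
    """Build the 'User-agent: *' group with extra Disallow lines (hypothetical helper)."""
    lines = ["User-agent: *", "Disallow: /search"]
    lines += [f"Disallow: {path}" for path in blocked_paths]
    lines.append("Allow: /")
    return "\n".join(lines) + "\n"


rules = build_rules(["/p/secret-1.html", "/p/secret-2.html"])
print(rules)

parser = RobotFileParser()
parser.parse(rules.splitlines())

BASE = "http://yourblogname.blogspot.com"  # placeholder blog address
print(parser.can_fetch("Googlebot", BASE + "/p/secret-1.html"))  # blocked page
print(parser.can_fetch("Googlebot", BASE + "/p/about.html"))     # hypothetical public page
```

The Disallow lines come before Allow: / on purpose, so a first-match parser reaches the blocked paths before the catch-all rule.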

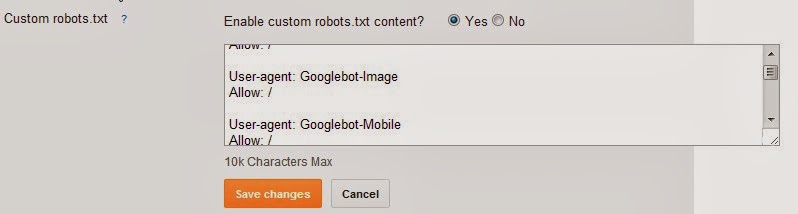

The image shows an example of a friendly robots.txt for Blogspot. If you want to change yours to match it, go to Settings > Search preferences > Crawlers and indexing, then enable Custom robots.txt. Don’t forget to save the changes when you are done.

Please suggest a full robots.txt file for my domain, http://www.geekdk.com.

Just use the friendly robots.txt shown in the last image above.